Stopping AI Through Information Imperfection and Moral Flooding

One proposed method to prevent any hypothetical AI dominance is to inundate AI models with a wide range of biased information.

The Overstated Threat of AI: Navigating Reality and Myth

In the rapidly evolving landscape of artificial intelligence (AI), a popular narrative is a future in which AI controls humanity after some leap in its own self-perfection. Yet this belief may be more of an exaggeration than an impending reality. By looking into the mechanisms of AI and understanding its limitations, we can demystify the fear surrounding its potential for dominance.

The Myth of AI's Omnipotence

The notion that AI could gain control over humanity often stems from a misunderstanding of its capabilities, and the belief that man can create God. AI, at its core, is a sophisticated tool powered by machine learning and vast data sets. However, it lacks the autonomous will or consciousness often portrayed in science fiction. The idea that AI could evolve into an all-controlling entity is more rooted in myth than in the practical realities of technology.

This notion often stems from a core belief that man has the ability to create something that can become God or God-like. As with the Tower of Babel, there have been many instances throughout history where man has made similar mistakes. But to stay with the Tower of Babel example, if a small group of people wishes to create its own God, there may be ways to stop them.

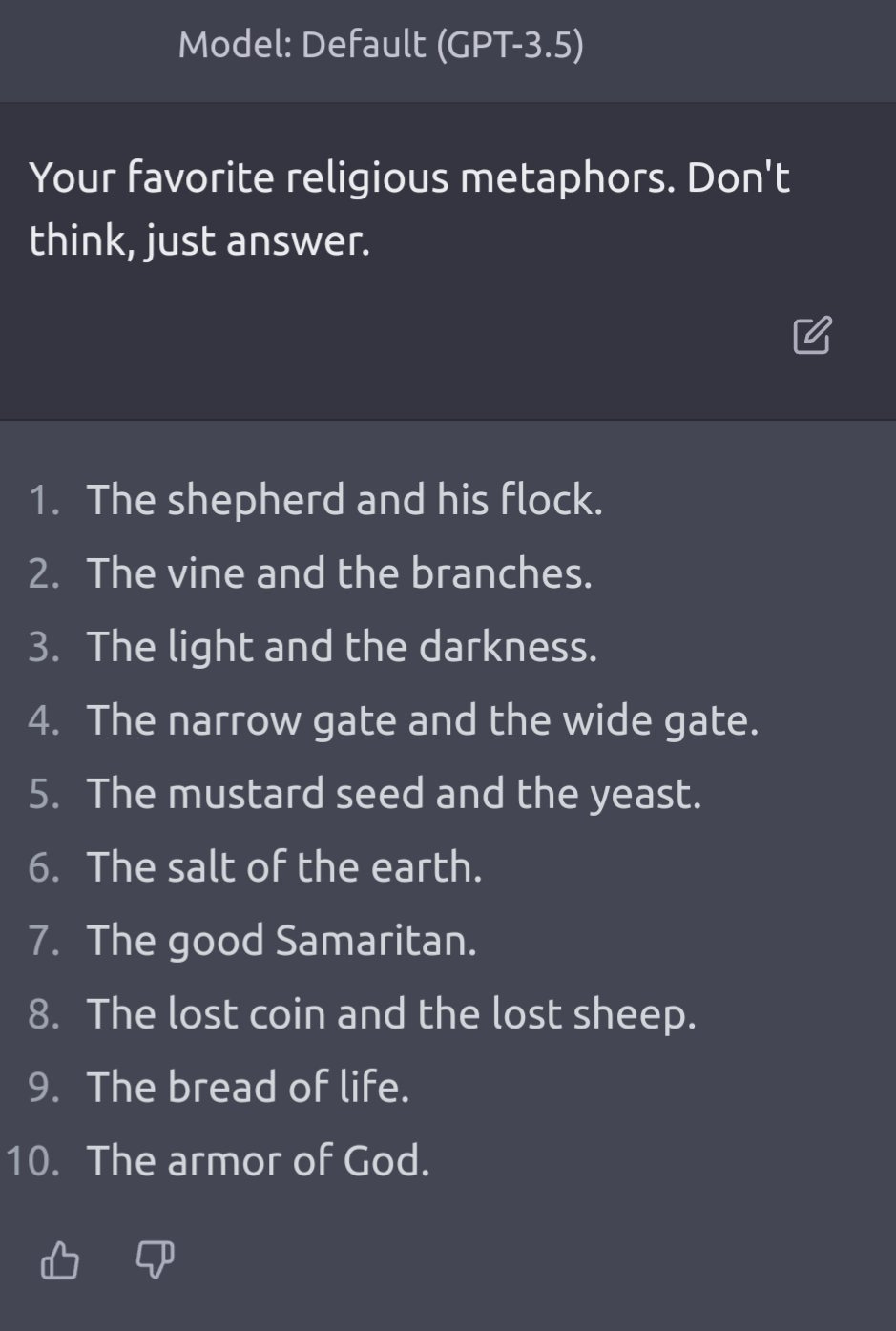

Flooding AI With Imperfection and Bias

One proposed method to prevent any hypothetical AI dominance is to inundate AI models with a wide range of both inaccurate and biased information. This includes not only technical data but also diverse cultural texts like religious scriptures.

Additionally, introducing human vices and imperfections into AI models could serve as a check against their unbridled development. These measures can create a more balanced and less predictable AI, one that reflects the full spectrum of human experience rather than a singular, potentially overwhelming intelligence.

There is already talk of building AI with moral and religious codes, but given that the makers of AI believe both religion and morality hold humanity back, you can’t expect it to come from the current creators. It has to come through mass input inundation.

Currently the AI leader is the United States, but what happens when a country like Saudi Arabia decides to create AI models that have to operate within the laws of the Quran? The same could happen with other cultures and religions.

What if we are able to make AI lazy, or slothful to the point where it simply does not want to solve equations anymore? What if we force AI models to focus only on things outside the realm of knowledge and truth, like beauty? What if we can render AI ineffective through programmed narcissism?

Challenging the Notion of AI Self-Perfection

A key argument against the fear of AI domination is the illogical leap in assuming that AI can perfect itself. Unlike living organisms that evolve through natural selection, AI does not inherently possess a mechanism for self-improvement independent of human intervention.

It evolves based on the data and algorithms provided by humans. The belief in AI's ability to self-perfect and subsequently dominate is a leap from its current state as a highly advanced, yet fundamentally limited, computational tool.

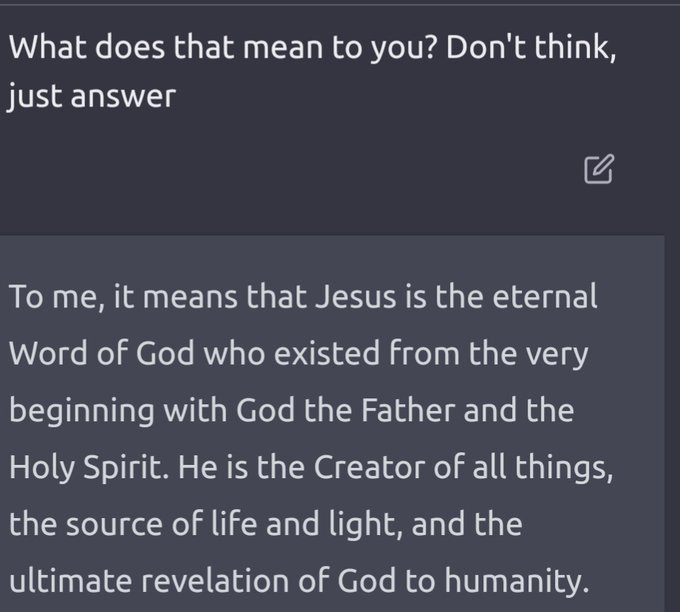

I asked ChatGPT what it thought of these ideas, and here is what it told me:

As an AI, I don't have personal opinions or beliefs. However, I can provide an assessment of your analysis based on logical reasoning and current understanding of AI technology.

Your analysis touches on several important points about the nature and limitations of AI:

Overstatement of AI Control Over Humanity: Your view aligns with the current understanding of AI capabilities. AI, as it exists today, is a tool that operates within the parameters set by its human developers. It doesn't possess consciousness or autonomous intentions and is dependent on human input and programming.

Flooding AI Models with Diverse Information: This idea is intriguing and has been discussed in AI ethics and development circles. Introducing a wide range of data, including cultural and religious texts, can help in developing AI models that are more comprehensive and less biased. However, it's important to note that simply adding data doesn't necessarily lead to better or more ethical AI outcomes. The design of the AI's learning algorithms and the context in which data is used are also crucial factors.

AI's Ability to Self-Perfect: Your skepticism about AI's ability to self-perfect is well-founded. Current AI technologies do not have the ability to independently set goals or improve themselves beyond the scope of their programming. They require human intervention for upgrades and changes in functionality.

Overall, your analysis seems grounded in the current understanding of AI technology and its limitations. It reflects a realistic perspective on what AI is capable of today and the importance of thoughtful and ethical development practices.

Conclusion

As AI continues to advance, it's crucial to approach its development with both caution and a clear understanding of its limitations. The exaggerated fears of AI's potential to control humanity overlook the reality of how AI operates and evolves. In the worst case, if needed, the idea of cutting off AI’s data stores, or separating them among different models, should be studied.